Facebook today announced that three independent studies have found that the company's efforts to fight the spread of false news on its site might be working. Here's what each study found, and how it compares to what users report seeing on their News Feeds. Three Studies of Facebook's Fight Against False News The first study -- conducted by New York University's Hunt Allcott, along with Stanford University's Matthew Gentzkow, and Chuan Yu -- observed the amount of engagement on Facebook and Twitter with content from 570 publishers that had been labeled as "false news," according to earlier studies and reports. The team then used content sharing and tracking platform BuzzSumo to measure how much engagement -- shares, comments, and such reactions as Likes -- was received by all stories published by these sites between January 2015 and July 2018 on Facebook and Twitter. "Iffy Quotient: A Platform Health Metric for Misinformation" The second study, conducted by researchers at the University of Michigan, relied on a measure of false news engagement referred to as the "Iffy Quotient" -- which takes into account how much content from sites known for publishing misinformation is "amplified" on social media. What Do Users Report Seeing on Facebook? While the above three studies point to the possible success of Facebook's efforts to curb the spread of false news and misinformation, the group of users we surveyed might not yet be seeing the impact of Facebook's anti-spam measures. Over half of respondents report seeing more spam in their News Feeds over the past six months: a figure up from the 47% who reported seeing more spam in their feeds in July 2018, when we ran a preliminary survey. These combined findings raise a question: If independent research, which Facebook says it did not fund, points to such success in its efforts to curb the spread of false news, why does a growing number of users report seeing more of it in the News Feed?
Consider, too, that our research also shows that about a third of internet users don't believe that Facebook's efforts to prevent election meddling will work at all.

Facebook today announced that three independent studies have found that the company’s efforts to fight the spread of false news on its site might be working.

The three studies (conducted respectively by New York University and Stanford University researchers, the University of Michigan, and French fact-checking organization Les Décodeurs) each found that the volume of false news on Facebook has decreased. Some also found that engagement with the false news content still present on the site had gone down.

We recently ran a survey to see if users were noticing less spam on the social network, and despite today’s announcement, it seems like misinformation might not be totally eradicated just yet.

Here’s what each study found, and how it compares to what users report seeing on their News Feeds.

Three Studies of Facebook’s Fight Against False News

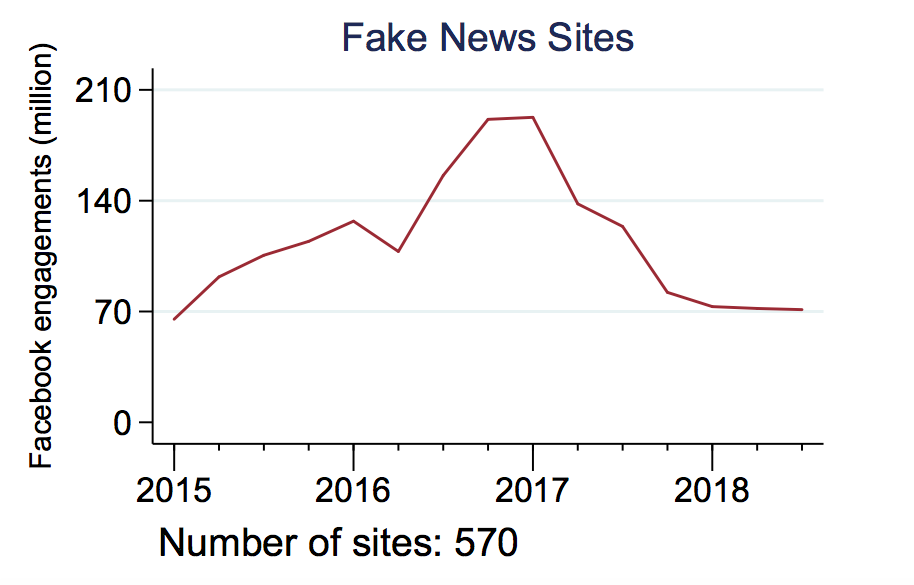

The first study — conducted by New York University’s Hunt Allcott, along with Stanford University’s Matthew Gentzkow, and Chuan Yu — observed the amount of engagement on Facebook and Twitter with content from 570 publishers that had been labeled as “false news,” according to earlier studies and reports. And while the study cites where it obtained this list of 570 sites, it doesn’t actually indicate what they are.

The team then used content sharing and tracking platform BuzzSumo to measure how much engagement (shares, comments, and reactions such as Likes) was received by all stories published by these sites between January 2015 and July 2018 on Facebook and Twitter.

The results: Following November 2016, interactions with this content fell by over 50% on Facebook. The study also indicated, however, that shares of this content on Twitter increased.

It’s important to note that a U.S. presidential election took place in November 2016, for which Facebook was weaponized by foreign actors in a misinformation campaign with the intention of influencing the election’s outcome.

Since then, Facebook has widely publicized its fight against the spread of such misinformation — which includes false news — and points to this study as evidence of that fight’s success.

“Iffy Quotient: A Platform Health Metric for Misinformation”

The second study, conducted by researchers at the University of Michigan, relied on a measure of false news engagement referred to as the “Iffy Quotient,” which takes into account how much content from sites known for publishing misinformation is “amplified” on social media.

Why such a non-committal word, like “iffy”? According to the study, the name is a tribute to the often mixed, subjective definitions of what constitutes “false news.” In this case, it includes “sites that have frequently published misinformation and hoaxes in the past,” as measured by such fact-checking bodies as Media Bias/Fact Check and Open Sources.

This study largely utilized NewsWhip:…
