Author: Tom Smith / Source: SearchEngineWatch

Technical SEO has certainly fallen out of fashion somewhat with the rise of content marketing, and rightly so.

Content marketing engages users and delivers real value to them, and it can put you on the map, placing your brand in front of far more eyeballs than fixing a canonical tag ever could.

While content is at the heart of everything we do, there is a danger that ignoring a site’s technical set-up and diving straight into content creation will not deliver the required returns. Failure to properly audit and resolve technical concerns can disconnect your content efforts from the benefits they should be bringing to your website.

The following eight issues need to be considered before committing to any major campaign:

1. Not hosting valuable content on the main site

For whatever reason, websites often choose to host their best content off the main website, either in subdomains or separate sites altogether. Normally this is because it is deemed easier from a development perspective. The problem with this? It’s simple.

If content is not in your main site’s directory, Google won’t treat it as part of your main site. Any links acquired on subdomains will not be passed to the main site in the same way as they would be if the content sat in a directory on the site.

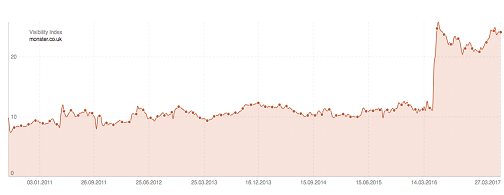

Sistrix posted this great case study on the job site Monster, which recently migrated two subdomains into its main site and saw a 116% uplift in visibility in the UK. The chart speaks for itself:

We recently worked with a client who came to us with a thousand referring domains pointing towards a blog subdomain. This represented one third of their total referring domains. Can you imagine how much time and effort it would take to build one thousand referring domains?

The cost of migrating content back into the main site is minuscule in comparison to earning links from one thousand referring domains, so the business case was simple, and the client saw a sizeable boost from this.
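A migration like this usually comes down to mapping every old subdomain URL to one new URL in a directory on the main site, then serving a 301 redirect between them. The snippet below is a minimal sketch of that mapping only. The hostnames `blog.example.com` and `www.example.com`, the `/blog` directory and the function name `redirect_target` are all assumptions made for illustration, not details from the client project described above.

```python
from typing import Optional
from urllib.parse import urlsplit, urlunsplit

# Hypothetical hosts and directory, used only for illustration.
OLD_HOST = "blog.example.com"
NEW_HOST = "www.example.com"
NEW_DIRECTORY = "/blog"


def redirect_target(old_url: str) -> Optional[str]:
    """Return the main-site URL that an old subdomain URL should 301 to.

    Returns None when the URL is not on the subdomain being migrated.
    """
    parts = urlsplit(old_url)
    if parts.hostname != OLD_HOST:
        return None
    # Keep the original path and query so each old URL maps to exactly one new URL.
    new_path = NEW_DIRECTORY + (parts.path or "/")
    return urlunsplit(("https", NEW_HOST, new_path, parts.query, ""))


for old in [
    "https://blog.example.com/seo-tips",
    "https://blog.example.com/2017/guide?ref=newsletter",
]:
    print(old, "->", redirect_target(old))
```

A one-to-one mapping like this is what lets links pointing at the old subdomain carry over to the equivalent page on the main site, rather than everything being redirected to the homepage.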

2. Not making use of internal links

The best way to get Google to spider your content and pass equity between sections of the website is through internal links.

I like to think of a website’s link equity as heat that flows through the site via its internal links. Some pages are linked to liberally and so are really hot; other pages are pretty cold, only getting heat from other sections of the site. Google will struggle to find and rank these cold pages, which massively limits their effectiveness.

Let’s say you’ve created an awesome bit of functional content around one of the key pain points your customers experience. There’s loads of search volume in Google and your site already has a decent amount of authority so you expect to gain visibility for this immediately, but you publish the content and nothing happens!

You’ve hosted your content in some cold directory miles away from anything that is regularly getting visits and it’s suffering as a result.

This works both ways, of course. Say you have a page with lots of external links pointing to it, but no outbound internal links – this page will be red hot, but it’s hoarding the equity that could be used elsewhere on the site.
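To make the heat analogy concrete, here is a toy model. It is emphatically not how Google calculates anything: it simply assumes heat enters a page through external links and that each page passes a fixed share of its heat (the `damping` value, an arbitrary assumption) evenly across its outbound internal links. The page paths, the link counts and the function name `link_heat` are all made up for illustration.

```python
def link_heat(internal_links, external_links, damping=0.85, iterations=50):
    """Toy estimate of how 'hot' each page is.

    internal_links: dict mapping a page to the list of pages it links to.
    external_links: dict mapping a page to its number of external links.
    A page with no outbound internal links passes nothing on (it hoards).
    """
    pages = set(internal_links) | set(external_links)
    for targets in internal_links.values():
        pages.update(targets)

    heat = {page: float(external_links.get(page, 0)) for page in pages}
    for _ in range(iterations):
        # Every round starts from the heat coming in from external links...
        new_heat = {page: float(external_links.get(page, 0)) for page in pages}
        # ...then each page shares part of its current heat with its targets.
        for source, targets in internal_links.items():
            if not targets:
                continue
            share = damping * heat[source] / len(targets)
            for target in targets:
                new_heat[target] += share
        heat = new_heat
    return heat


# Hypothetical site: the viral piece has 50 external links but links nowhere.
site = {"/": ["/guides", "/products"], "/guides": ["/"], "/viral-piece": []}
inbound = {"/": 10, "/viral-piece": 50}

hoarding = link_heat(site, inbound)
site["/viral-piece"] = ["/products"]  # add a single internal link
sharing = link_heat(site, inbound)

print("products before:", round(hoarding["/products"], 2))
print("products after: ", round(sharing["/products"], 2))
```

In this sketch the product page warms up considerably once the hot page links to it, which is the whole point of checking where your most-linked content sends its visitors and crawlers.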

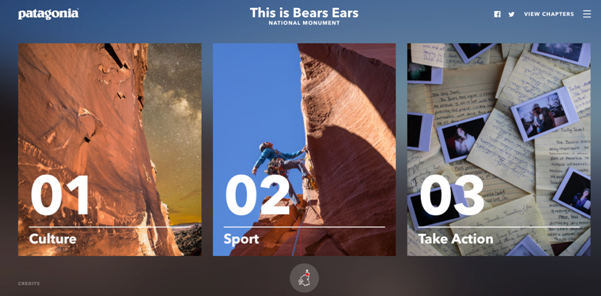

Check out this awesome bit of content created about Bears Ears National Monument:

Except they’ve only got a single link back to the main site, and it is buried in the credits at the bottom of the page. Why couldn’t they have made the logo a link back to the main site?

You’re probably going to have lots of content pages that are great magnets for links, but more than likely these are not your key commercial pages. You want to ensure relevant links are included between hot pages and key pages.

One final example of this is the failure to break up paginated content with category or tag pages. At Zazzle Media we’ve got a massive blog section which, at the time of writing, has 49 pages of paginated content! Link equity is not going to be passed through 49 paginated pages to historic blog posts.

To get around this we included links to our blog posts from our author pages which are linked to from a page in the main navigation:

Another way around this would be to add tag or category pages for the blog. Just make sure these pages do not cannibalize other sections of the site!
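One rough way to see the pagination problem is to count how many clicks it takes to reach a post from the homepage. The sketch below runs a breadth-first search over a made-up link graph with 49 paginated blog pages, then adds a navigation page and an author page as a shortcut. The URL patterns, the helper names `paginated_blog` and `click_depth`, and the assumption of one post per paginated page are all simplifications for illustration, not a description of the real Zazzle Media site.

```python
from collections import deque


def paginated_blog(page_count):
    """Build a hypothetical link graph: home -> page 1 -> page 2 -> ... with one post per page."""
    links = {"/": ["/blog/page/1"]}
    for n in range(1, page_count + 1):
        targets = [f"/blog/post-{n}"]
        if n < page_count:
            targets.append(f"/blog/page/{n + 1}")
        links[f"/blog/page/{n}"] = targets
    return links


def click_depth(links, start="/"):
    """Return the minimum number of clicks from start to every reachable page."""
    depth = {start: 0}
    queue = deque([start])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depth:
                depth[target] = depth[page] + 1
                queue.append(target)
    return depth


links = paginated_blog(49)
before = click_depth(links)["/blog/post-49"]

# Shortcut: a page in the main navigation links to author pages, which link to posts.
links["/"].append("/authors")
links["/authors"] = ["/authors/jane"]
links["/authors/jane"] = ["/blog/post-49"]
after = click_depth(links)["/blog/post-49"]

print("clicks to oldest post before:", before)
print("clicks to oldest post after: ", after)
```

The fewer clicks a historic post sits from a well-linked page, the better its chances of receiving some of that page's equity, which is the reasoning behind both the author-page and the category-page approach.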

3. Poor crawl efficiency

Crawl efficiency is a massive issue we see all the time, especially with larger sites. Essentially, Google will only crawl a limited number of pages on your site at any one time. Once it has exhausted its budget it will move on and return at a later date.

If your website has an unreasonably large number of URLs, Google may get stuck crawling unimportant areas of your website while failing to index new content quickly enough.

The most common cause of this is an unreasonably large number of query parameters being crawlable.

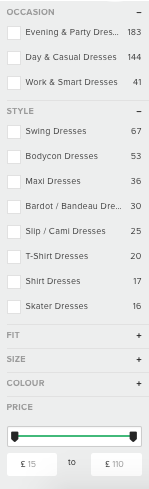

You might see the following parameters working on your website:

https://www.example.com/dresses

https://www.example.com/dresses?category=maxi

https://www.example.com/dresses?category=maxi&colour=blue

https://www.example.com/dresses?category=maxi&size=8&colour=blue

Functioning parameters are rarely search friendly. Creating hundreds of variations of a single URL for engines to crawl individually is one big crawl budget black hole.
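To get a feel for the scale of the problem, the sketch below does two things with the example URLs above. First it groups crawled URLs by their parameter-free version, so you can see how many variations sit behind one page. Then it estimates how many URLs a set of filters can generate when any combination is allowed. The filter option counts (5 categories, 10 sizes, 12 colours) and both function names are assumptions for illustration, and the estimate ignores parameter ordering, which can multiply the number further.

```python
from collections import defaultdict
from math import prod
from urllib.parse import urlsplit, urlunsplit


def group_by_base_url(urls):
    """Group URLs by scheme, host and path, ignoring the query string."""
    groups = defaultdict(list)
    for url in urls:
        parts = urlsplit(url)
        base = urlunsplit((parts.scheme, parts.netloc, parts.path, "", ""))
        groups[base].append(url)
    return dict(groups)


def count_parameter_urls(option_counts):
    """Estimate URLs per page when each filter is either unset or set to one option."""
    return prod(count + 1 for count in option_counts.values())


crawled = [
    "https://www.example.com/dresses",
    "https://www.example.com/dresses?category=maxi",
    "https://www.example.com/dresses?category=maxi&colour=blue",
    "https://www.example.com/dresses?category=maxi&size=8&colour=blue",
]

for base, variants in group_by_base_url(crawled).items():
    print(base, "has", len(variants), "crawlable variations")

# Hypothetical option counts for each filter on a single category page.
filters = {"category": 5, "size": 10, "colour": 12}
print("possible URLs for one page:", count_parameter_urls(filters))
```

Running the grouping over a crawl export or server log is a quick way to spot which templates are generating the most parameter variations before deciding how to rein them in.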

River Island’s faceted navigation creates a unique parameter for every combination of buttons you can click:

This creates thousands of different URLs for each…
