
Sometimes, I think that we here at HubSpot are just a bunch of mad scientists.

We love to run experiments. We love to throw bold ideas at the wall to see if they stick, tinker with different factors, and see how the results can be incorporated into what we do every day. To us, it’s a very hot topic: we write about it whenever we can, and we try to lift the curtain on what we’re cooking up behind the scenes on our own marketing team.

But we’re going to let you in on yet another secret: Experiments are not designed to improve metrics.

Instead, experiments are designed to answer questions. And in this post, we’ll explain some fundamentals of what experiments are, why we conduct them, and how answering questions can lead to improved metrics.

What Is an Experiment?

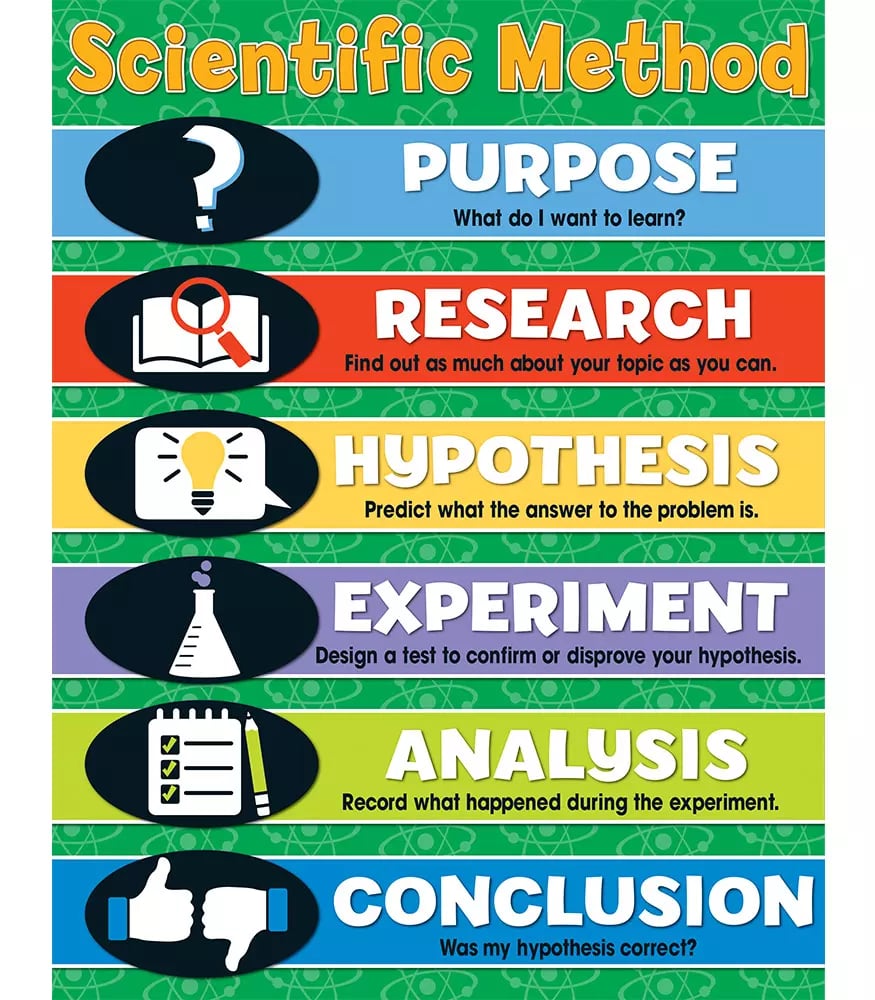

In a forward-thinking marketing environment, it’s easy to forget why we run experiments in the first place, and what they fundamentally are. That’s why we like the term “design of experiments,” which refers to “a systematic method to determine the relationship between factors affecting a process and the output of that process.” In other words, this method is used to discover cause-and-effect relationships, which is the whole point of running experiments in the first place.

To us, that informs a lot of the decision-making behind experiments, especially whether or not to run one at all. In other words, what are we trying to learn, and why?
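To make the "factor and output" idea a little more concrete, here is a minimal sketch of how you might check whether changing one factor (which page variant a visitor saw) relates to one output (whether they converted). It assumes a simple two-variant test and uses a pooled two-proportion z-test, which is one common choice and not necessarily the method our team uses. The function name and the numbers in the usage lines are hypothetical, made up purely for illustration.

```python
from math import erf, sqrt


def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Compare conversion rates of a control (a) and a variant (b).

    Returns (rate_a, rate_b, z, p_value), where p_value is two-sided.
    Assumes independent visitors and reasonably large samples.
    """
    if n_a <= 0 or n_b <= 0:
        raise ValueError("Both groups need at least one visitor.")

    rate_a = conv_a / n_a
    rate_b = conv_b / n_b

    # Pooled rate under the assumption that the variant changed nothing.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_err = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if std_err == 0:
        raise ValueError("No variation in outcomes; the test is undefined.")

    z = (rate_b - rate_a) / std_err

    # Two-sided p-value from the standard normal CDF.
    normal_cdf = 0.5 * (1 + erf(abs(z) / sqrt(2)))
    p_value = 2 * (1 - normal_cdf)
    return rate_a, rate_b, z, p_value


# Hypothetical counts, for illustration only.
rate_a, rate_b, z, p = two_proportion_z_test(40, 1000, 60, 1000)
print(f"control={rate_a:.1%} variant={rate_b:.1%} z={z:.2f} p={p:.3f}")
```

Notice that the output answers a question ("did visitors behave differently on the variant?") and says nothing about why. The "why" is what the rest of the experiment design has to supply.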

Experiments Are Quantitative User Research

From what I’ve seen around the web, there seems to be a bit of a misconception around experimentation — so please, allow me to set the record straight. As marketers, we do not run experiments to improve metrics. That type of thinking actually demonstrates a fundamental misunderstanding of what experiments are, and how the scientific method works.

Instead, marketers should run experiments to gather behavioral data from users, to help answer questions about who those users are and how they interact with a website. Before a given experiment, there may have been some misinformed assumptions about users. The answers an experiment produces challenge those assumptions, and provide better context for how people are using your online presence.

That’s one thing that makes experimentation such a learning-centric process: It forces marketers to acknowledge that we might not know as much as we’d like to think we do about how our tools are being used.

But if it’s your job to improve metrics, fear not — while the purpose of experimentation isn’t necessarily to accomplish that goal, it still has the potential to do so. The key to unlocking improved metrics is often the knowledge gained through research. To shed light on this, let’s take a closer look at assumptions.

Addressing Assumptions

In reality, many marketers build an online presence based on what I call a “pyramid of assumptions.” That often happens when we don’t thoroughly answer the following questions prior to that build:

- Do we know who is finding a particular page?

- Do we know why they are visiting a particular page?

- Do we know what they were doing before visiting a particular page?

When you think about it, it’s kind of a loony concept to build online assets without these answers. But hey, you’ve got things to do. There are about a hundred more pages that you need to build after this one. Oh, and there’s that redesign that you need to get to. In other words, we understand why marketers take these shortcuts and make these assumptions when facing the pressure of a deadline. But there are consequences.

If those initial, core assumptions are wrong, conversions might be left on the table — and that’s where experimentation serves as a potential opportunity to improve metrics. (See? We wouldn’t leave you hanging.) The data you collect from experiments helps you answer questions — and those answers give you context…
