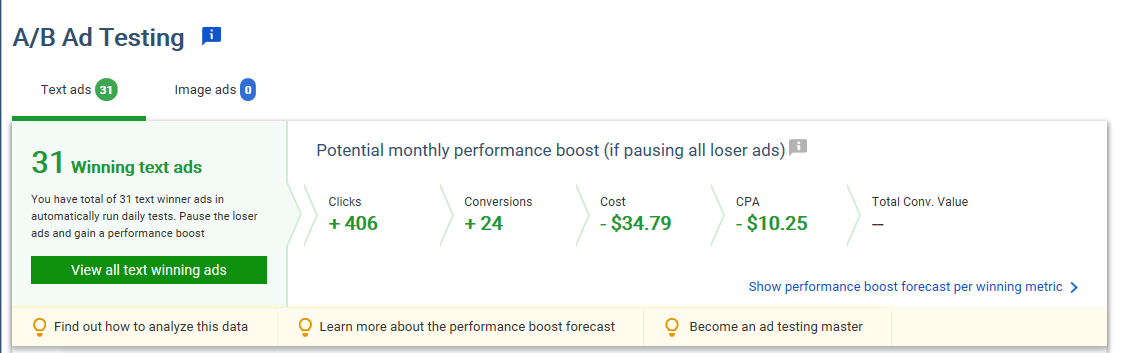


My first step in setting up a new AdWords campaign is to test the ad copy. AdWords has long recommended running three or more ads in each ad group, and testing new messaging is standard industry practice.

The most common protocol for testing ad copy in a single ad group looks like this.

- Write two or three ad variations to start. Ideally, each ad is built around a basic hypothesis.

- Allow enough time and data to accrue for a statistically significant winner to emerge.

- Pause the underperforming ads, leaving a single “champion.”

- Analyze why the champion won and why the underperformers did not.

- Write one or two new “challenger” ads, each again testing a hypothesis.

- Repeat the process.
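The protocol hinges on knowing when a statistically significant winner has emerged. The article does not say which test to use; a common choice for click-through rate is a two-proportion z-test. Below is a minimal Python sketch under that assumption, using a 95% confidence threshold (alpha = 0.05) and made-up click and impression counts. The function names are hypothetical and are not part of AdWords or any testing tool.

```python
import math


def two_proportion_z_test(clicks_a, impressions_a, clicks_b, impressions_b):
    """Return (z, two-sided p-value) for the CTR difference between ads A and B."""
    ctr_a = clicks_a / impressions_a
    ctr_b = clicks_b / impressions_b
    # Pooled CTR under the null hypothesis that both ads perform equally.
    pooled = (clicks_a + clicks_b) / (impressions_a + impressions_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / impressions_a + 1 / impressions_b))
    if se == 0:
        # No variation at all (e.g., zero clicks on both ads): nothing to conclude.
        return 0.0, 1.0
    z = (ctr_a - ctr_b) / se
    # Two-sided p-value from the standard normal distribution: erfc(|z| / sqrt(2)).
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


def pick_champion(name_a, stats_a, name_b, stats_b, alpha=0.05):
    """Return the winning ad's name, or None if no significant winner yet.

    stats_a and stats_b are (clicks, impressions) tuples; alpha=0.05 means 95% confidence.
    """
    z, p_value = two_proportion_z_test(*stats_a, *stats_b)
    if p_value >= alpha:
        return None  # Keep both ads running and let more data accrue.
    return name_a if z > 0 else name_b


# Hypothetical numbers: the champion vs. one challenger in a single ad group.
print(pick_champion("Champion", (120, 4000), "Challenger", (80, 4000)))  # → Champion
print(pick_champion("Champion", (6, 200), "Challenger", (4, 200)))  # → None
```

The same CTR gap (3% vs. 2%) is significant at 4,000 impressions per ad but not at 200, which is why the protocol says to let data accrue before pausing anything. The function also works for conversion rate if you pass (conversions, clicks) instead of (clicks, impressions).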

The main benefit of a testing process is improved performance in key indicators, such as a higher click-through rate, a higher conversion rate, or a larger average purchase.
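For reference, these indicators are usually computed as follows (standard definitions; exactly what counts as a conversion depends on your conversion-tracking setup):

```latex
\text{CTR} = \frac{\text{clicks}}{\text{impressions}}, \qquad
\text{conversion rate} = \frac{\text{conversions}}{\text{clicks}}, \qquad
\text{average order value} = \frac{\text{revenue}}{\text{orders}}
```

For example, an ad with 90 clicks on 3,000 impressions has a CTR of 90 / 3,000 = 3%.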

A solid testing regimen should keep KPIs trending upward or at least keep them from declining. Constant growth is more or less impossible, especially in competitive niches where rivals can see your ad copy and imitate it.

Another, often overlooked, benefit is the insight you gain about your target customers. If your ad test, for example, were set up to test the hypothesis that a dollar discount is more effective than a percentage discount, your results would provide a powerful advantage. If the hypothesis holds, you could start offering dollar-off discounts in your email marketing and in social media promotions, confident that they are more effective than percent-off discounts.

Automated Testing

I’ve long used Excel pivot tables to aggregate data and determine winning ad variations, or even winning phrases. That process can be laborious, so it has become a target of various automation efforts.
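The pivot-table step amounts to grouping raw report rows by ad and summing impressions, clicks, and conversions before computing rates. Here is a minimal Python sketch of that aggregation using only the standard library. The row layout and the sample ad copy (which borrows the dollar-versus-percent example) are hypothetical, not an actual AdWords export format.

```python
from collections import defaultdict

# Hypothetical daily report rows: (ad group, ad headline, impressions, clicks, conversions).
ROWS = [
    ("Widgets", "Save $10 Today", 1000, 40, 4),
    ("Widgets", "Save 10% Today", 1000, 30, 2),
    ("Widgets", "Save $10 Today", 1200, 45, 5),
    ("Widgets", "Save 10% Today", 1100, 28, 3),
]


def summarize(rows):
    """Sum metrics per (ad group, ad), like a pivot table, then derive rates."""
    totals = defaultdict(lambda: [0, 0, 0])  # [impressions, clicks, conversions]
    for group, ad, impressions, clicks, conversions in rows:
        t = totals[(group, ad)]
        t[0] += impressions
        t[1] += clicks
        t[2] += conversions

    summary = {}
    for key, (impressions, clicks, conversions) in totals.items():
        summary[key] = {
            "impressions": impressions,
            "clicks": clicks,
            "conversions": conversions,
            "ctr": clicks / impressions if impressions else 0.0,
            "conv_rate": conversions / clicks if clicks else 0.0,
        }
    return summary


def leader_by(summary, metric):
    """Return the (ad group, ad) key with the highest value of `metric`."""
    return max(summary, key=lambda k: summary[k][metric])


summary = summarize(ROWS)
for (group, ad), m in summary.items():
    print(f"{group} | {ad}: CTR {m['ctr']:.2%}, conv. rate {m['conv_rate']:.2%}")
print("CTR leader:", leader_by(summary, "ctr"))
```

Note that `leader_by` only reports which ad is currently ahead; it says nothing about whether the gap is statistically significant, so a separate significance check is still needed before declaring a champion.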

AdWords itself has offered campaign settings that “optimize” ad rotation. There used to be two options, optimize for clicks or optimize for conversions; now there is just one choice, “optimize.” (I addressed it in September, in “AdWords Changes Coming Fall 2017.”) This new “optimize” feature is AdWords’ poor man’s version of automated ad testing.

And it appears AdWords is doubling down.

In a recent interview on Search Engine Land, Matt Lawson, director of performance ads marketing at Google, spoke with Nicolas…
